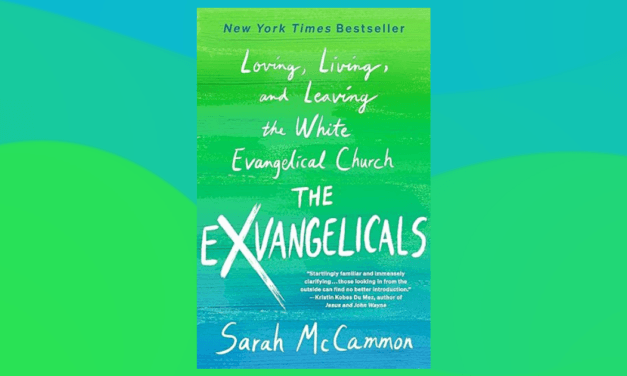

This article first appeared in the Christian Research Journal volume 23, number 1 (2001).

America’s love affair with technology is rooted in a type of secular Manifest Destiny, which sees any innovation as positively providential. Few of us realize that for every expressed purpose a technology is designed to serve, there are always a number of unintended consequences accompanying it. History is full of examples. The automobile awarded us with the commute and family motor vacation, but it also assisted in severing community and family ties. The atomic bomb saved millions of lives, but it was a technological bargain with the devil.

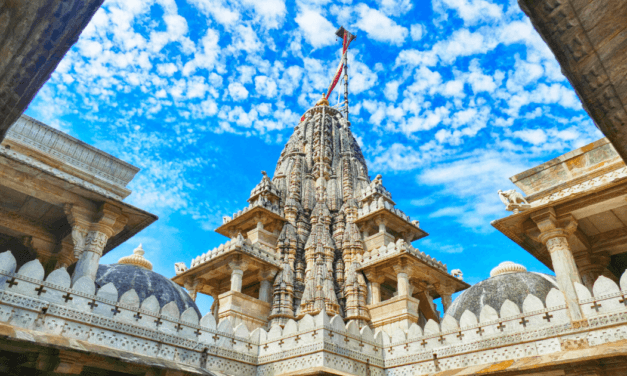

One of the most significant challenges that should concern us is what repercussions will transpire as America shifts from a print-oriented culture to an image-oriented one. Early on, Americans had little difficulty accommodating themselves to the presence of the television set in their homes. Within a relatively short time, our new electronic media have already changed the face of American politics and religious worship. If it was a logos-centered culture that helped produce our Protestant heritage, as well as American democracy itself, what will be the birth child of the continuing devaluation of the written word? The technological shift from the printed word to the visual image may not be the panacea for which we have been wishing. Rather than going forward, we actually might be going backward. In short, Tomorrowland has the potential to become a triumph for the older system: pagan idolatry.

In the early 1960s, Orlando, Florida, was a crossroads community of citrus groves, farmland, and swamps. Back then, one could buy an acre in Orange County for about $200. So, when Walt Disney bought 46 square miles of land, the citizens of Orlando must have mused how life would be different under the shadow of Mickey Mouse. The dreamer from Hollywood, however, was not content to build another theme park; he was ready to try his hand at creating an elaborate city of the future.

Upon the Florida flatland, Walt Disney envisioned a “City of Tomorrow,” where dirt, disease, and poverty would be nonexistent; a nuclear-powered metropolis, controlling its own climate and recycling its own waste; a radiant web of white pods connected by silent transit systems. He quietly asserted that the city would be paid for from the profits of his new Disney World.1 Only a handful knew the scope of Disney’s City of Tomorrow and how it came to dwarf all other projects on his drawing board.

Walt Disney never saw his utopia because he died in 1966, just after construction began in Orlando. Disney’s successors, more concerned with the bottom line, settled for what became the present-day EPCOT. Heirs of the Disney dynasty thought it more prudent to follow the tried formula of entertaining crowds with fantasy rides. The City of Tomorrow evolved into an expanded entertainment haven. Other parks followed. Today, metro Orlando receives almost 50 million visitors a year. Almost all of us, at one time or another, have made a pilgrimage to one of central Florida’s amusement Meccas.

The development of Orlando allegorizes the changes taking place in our own culture. Like Walt Disney’s successors, we also have thrown off our modern visions of utopia for an easier, more attainable “fun land.” Orlando serves as an emblematic gauge of an image-driven public, dependent on movies, television, and video games.

The Age of Image in a Technowonderland

It does not take a social scientist to tell us that our culture has an insatiable appetite for visual stimulation. Within the last several years, Disney and similar companies have devoted their energies to creating virtual reality rides, even procuring NASA rocket scientists to design image-enhanced simulators. This is not to say America has given up on space exploration, only that there seems to be more profit in applying our laser technology toward amusing consumers. In promoting Orlando’s new Islands of Adventure, Steven Spielberg predicted that “virtual reality will live up to its name for the first time in the next ten years…because you’ll be surrounded by images.…You’ll feel the breezes. You’ll smell the smells.…Yet when you stand back and turn on a light to look at where you’ve been standing, you’re just in a dark room with a helmet on.”2 Spielberg claims that today’s generation demands reality in its high-tech recreations. Entertainment engineers know that the level of electrosophistication possessed by young media connoisseurs is so keen that the thrill once provided by a wooden roller coaster is now antiquated.

Advances in technology have augmented virtual reality’s popular appeal, and it is with technology that America has had an ongoing love affair. Historically, the affection Americans have bestowed upon technological advancement has been rooted in a progressive spirit — a type of secular Manifest Destiny that sees any innovation as providential. Technology, progress, and the future are all synonyms in contemporary American culture. Technology has made us live longer and more comfortable lives and has also made us rich. Virtual reality is deemed good because it represents progress. The same can be said for the invention of television, the computer, and the washing machine. To many Americans, to deny this is anathema.

A celebration of technological progress is enshrined at one particular attraction in Disney World’s Tomorrowland: General Electric’s “Carousel of Progress.” The ride is a cute summary of America’s technological love fest. Touring Tomorrowland is like walking around in the future wearing Jules Verne spectacles. Perhaps the first to describe ecotourism, Verne would have been delighted with Disney World. This is true not only because Disney made film and ride versions of 20,000 Leagues under the Sea, but also because Jules Verne shared Walt Disney’s obsession with technology and the future. The Carousel of Progress traces 100 years of progress as tourists relax in a sit-down revolving theater. In the background we hear voices singing, “There’s a great big beautiful tomorrow / Shining at the end of every day.”

The show focuses on a family’s history through time, displaying all the conveniences made possible by innovations in electricity. There is a father, mother, daughter, son, grandfather, grandmother, and a silly cousin named Orville. The ride scoots one about from the past into the future with a historical panorama of our most remarkable household inventions. What is so intriguing about the show is that every family in every generation looks the same — always laughing and enjoying each other’s company. Only the inventions have been changed. The message we walk away with is that technology is neutral or positive and only serves to make us happier.

Technology Is Not Neutral

Contrary to popular thinking, technology is not neutral. It has the propensity to change our beliefs and behavior. For example, any historian will affirm that the printing press hurled Europe out of the Dark Ages into the Protestant Reformation. When Johann Gutenberg introduced movable type in the fifteenth century, a whole new world opened up — liberty, freedom, discovery, and democracy. The Bible became available to the people. Martin Luther called the invention of the printing press the “supreme act of grace by which the gospel can be driven forward.” Europe was set on fire. People were thinking, arguing, creating, and reflecting. The printing press allowed ideas to be put in black and white so anyone could analyze or criticize them. To a great extent, America was born out of a print-oriented culture.

What often escapes our notice in public discussions is how new technologies create unintended effects. In this sense, technology is a mixed blessing to societies, whether the machines pertain to warfare, transportation, or the communications media. What Jules Verne knew, and what Walt Disney might not have cared to know, was how the future could have a dark side. Verne understood the biases of technology, that technology had the capacity to change us or even destroy us. In the Disney movie, because Captain Nemo feared the Nautilus would fall into the wrong hands, he blew it up along with the island that sheltered its mysterious secrets. Nemo’s periscope might not have been able to rotate all the way around, but he was not too far off the deep end to fathom the depths of human depravity. Nemo would have agreed with King Solomon, who wrote, “Lo, this only have I found, that God hath made man upright: but they have sought out many inventions.” (Although Leonardo da Vinci contrived a submarine 300 years before the birth of Jules Verne, it is an interesting fact of history that the great inventor suppressed it because he felt it was too satanic to be placed in the hands of unregenerate men.3)

Glitzy machines have a way of mesmerizing us so we do not think about the unintended consequences they create. Our situation today is very much like a train we have all boarded with enormous enthusiasm. With great splendor the train embarks from the station while we cheer, “Onward! Forward!” The train picks up speed, and we all shout, “Progress! Prosperity!” Faster and faster the wheels turn. With tremendous velocity the train races down the track. “Faster! Faster!” we yell. But we don’t even know where the train is taking us! We don’t know where it is going. It is a mystery train. Jules Verne and Walt Disney were able to make fantasizing about the future a commodity, which we have ingested right on up through the George Jetson cartoons and endless Star Trek spin-offs. For a hundred years we have anticipated the twenty-first century in visions of rockets, gadgets, and push buttons. Now the new millennium has arrived. The future is here.

History’s Testimony to the Bias of Technology

Technology’s inherent biases can be detected in two particular inventions of the twentieth century: atomic weaponry and the automobile. “Nuclear fission is now theoretically possible,” wrote Albert Einstein in a 1939 letter to President Franklin Roosevelt, explaining the power unleashed when the nucleus of a uranium atom is split. Fearing the Germans were also close to the same discovery, Roosevelt authorized the Manhattan Project to develop an atomic weapon. Five years later, the bomb was ready. The decision to unleash the bomb on Japanese citizenry followed two other possible alternatives. One option involved persuading the Japanese into surrendering by dropping it in some unpopulated wooded area. The idea was that the Japanese would run over to the site, see the big hole, and give up. But President Truman’s advisors preferred a more tangible target. A second alternative was to invade Japan, but the casualties for such a plan were estimated to be over the million mark.

Some scientists on the Manhattan Project had doubts about the “genie of technological destruction their work had uncorked.”4 James Franck, chairman of a committee on the bomb’s “Social and Political Implications,” warned of the problems of international control and the danger of precipitating an arms race. His report claimed the Japanese war was already won and Japan was on the brink of being starved into surrender. Truman’s advisors ignored Franck’s report, urging the President to drop the bomb on a large city with a legitimate military target. Hiroshima had an army base and a munitions factory. Truman was confident. One historian notes that an “irreversible momentum” to use the bomb superseded all other alternatives. Truman felt the bomb “would save many times the number of lives, both American and Japanese, than it would cost.”5 One of Truman’s generals later observed, “He was like a little boy on a toboggan.”6

Shortly after eating their breakfast on 6 August 1945, the inhabitants of Hiroshima noticed an object waft in the sky. It probably reminded them of an episode a few days earlier when a flock of leaflets flittered to the ground warning of an imminent attack on their city. The message could not have been too surprising. They knew a war was on and the enemy was winning. The bulk that pulled the sail to earth that morning weighed four tons and cost four million dollars to develop. The last memory held by curious gazers was a solitary flash of light. Once detonated, the light rippled from the center of the city, puffing houses, bridges, and factories to dust. The explosion killed one-third of Hiroshima’s 300,000 residents instantly. Another bomb killed 80,000 in the same manner three days later at Nagasaki. Over the next five years, 500,000 more would die from the effects of radiation exposure. Today, nuclear weapons are 40 times more powerful than the Hiroshima bomb.

Truman’s decision to use the bomb probably saved millions of lives. The debate continues; but it certainly was a bargain with the devil. The tradeoff for having developed a machine of mass destruction yesterday is living with the threat of being blown to little bits tomorrow. Technoenthusiasts are incessantly expounding what a new machine can do for us, but little deliberation is ever afforded as to what a new machine will do to us. Technological advancement always comes with a price, of which history is more than willing to disgorge examples.

Henry Ford once confessed, “I don’t know anything about history.”7 The world’s greatest industrialist would go on the record pronouncing to the world, “History is more or less bunk.” Perhaps had Ford known his history a bit better, he would have foreseen the social effects of the automobile. Although the automobile awarded us with the commute and the family motor vacation, it also assisted in severing community and family ties like no other invention of its time.

The famous Middletown study, which focused on a typical American community in the 1920s, documents the social effects of the automobile. The researchers selected the town of Muncie, Indiana, termed “Middletown,” because they viewed it to be the closest representation possible of contemporary American life.8 Halfway into the 1920s, it could be said that the “horse culture” in Middletown had trotted off into the sunset. The horse fountain at the Courthouse square dried up, and possessing an automobile was deemed a necessity for normal living. The car soon became a way for youth to escape authority. It allowed young couples to pair off. It was, in effect, a portable living room for eating, drinking, smoking, gossip, and sex.9 Of 30 girls brought before the Middletown juvenile court charged with “sex crimes” within a given year, 19 of them were listed as having committed the offences in an automobile.10 When Henry Ford formulated the assembly line, he probably did not envision himself as a villain to virginity. Nevertheless, in making the automobile a commodity, he moved courtship from the parlor to the backseat.

It is not the purpose here to suggest that we stop developing instruments of war or permanently park our cars. These are not the rants of a technophobe; yet, few of us in the information age ever stop to consider this truth: For every expressed purpose a technology is designed to serve, there are always a number of unintended consequences accompanying it. Tomorrowland poses a host of challenges that gadget masters are not likely to point out. One of the most significant challenges that should concern us is what repercussions will transpire as America shifts from a print-oriented culture to an image-oriented one.

The Advent of Television

The first American commercial TV broadcast occurred at the New York World’s Fair on 30 April 1939. Television was the technology for the next generation and was showcased in an exposition offering a gleaming glimpse of the future. The fair’s optimistic theme was “Building the World of Tomorrow,” the same secular gospel of a bright future through technology Walt Disney later embraced as a major element in his own theme parks.

At the physical center of the fair stood a towering three-sided obelisk, and next to it, an immense white sphere. Facing the dominating structures, President Roosevelt announced, “I hereby dedicate the World’s Fair, the New York World’s Fair of 1939, and I declare it open to all mankind!”11 The President’s image was dispersed from aerials atop the Empire State Building in a live broadcast by NBC. A week earlier, David Sarnoff dedicated the RCA building in television’s first news broadcast. His words were prophetic: “It is with a feeling of humbleness that I come to this moment of announcing the birth in this country of a new art so important in its implications that it is bound to affect all society.”12

Yes, it was bound to do so, which, no doubt, was the underlying basis of Sarnoff’s humility. Also reflecting on the impact of television at the time was the fair’s science director, Gerald Wendt, who wrote that “democracy, under the influence of television, is likely to pay inordinate attention to the performer and interpreter rather than to the planner and thinker.”13 Wendt apparently understood that even if television were utilized for the “public good,” thinking could never be a performing art. But these kinds of debates would have to be postponed. Life magazine observed how the fair “opened with happy hopes of the World of Tomorrow and closed amid war and crisis.”14 Four months after television’s first commercial broadcast, Hitler invaded Poland.

The 1950s have been dubbed the “Golden Age of Television,” but since the days of I Love Lucy, our tube time has roughly doubled. Early on, Americans had little difficulty accommodating themselves to the presence of the television set in their homes. It wasn’t too long after television’s debut that the set prodded its way into the living room, replacing such older focal points as the fireplace and piano. Current estimates confirm the television screen is flickering about 40 hours a week in the average household. Most homes now have at least three television sets, and 54 percent of children have a set in their bedroom.15

There is a big difference between processing information on a printed page and assimilating data conveyed through a series of moving pictures. Images have a way of evoking an emotional response. Pictures are effective at pushing rational discourse — linear logic — into the background. The chief aim of television is to sell products and entertain audiences. Generally speaking, television seeks to provide emotional gratification. As a visual medium, its programming is designed to be amusing. Substance gives way to sounds and sights. Stirring feelings obscure hard facts. Dramatic images drown out important issues. Emotion replaces reason.

Dumbing Down for the Twenty-First Century

In a national literacy study issued by the U.S. Department of Education in 1993, almost half of the adults performed within the lowest levels of literacy proficiency.16 It is quite remarkable that although school is compulsory for all children in this country up into the high school years, a large chunk of the population is functionally illiterate. This means that almost a majority of us have difficulty “using printed and written information to function in society, to achieve one’s goals, and to develop one’s knowledge and potential.”17 The first half of the 1990s saw a dramatic drop in reading for pleasure. In 1991, 75 percent of adults claimed to read for pleasure in the course of a week. By 1994, the figure had fallen to 53 percent.18 The Barna Research Group reports that more people are using the library for alternative media (compact discs, audio cassettes, videos) rather than for checking out books and that a marked decline in reading is one of the fastest-changing behaviors within the past two decades.19

Signs that we are on the threshold of a bold new image-oriented era are evidenced on today’s political terrain. Being a professional wrestler has recently been shown to be an effective asset for capturing the attention of the electorate. For all practical purposes, it worked for Jesse “The Body” Ventura, who promised that if elected governor of Minnesota, he would rappel from a helicopter onto the statehouse lawn. (I have always categorized a professional wrestler as an individual who shouts and spits red-faced threats into a television camera after a round of grunting and gyrating playacting.) So, how in the world did the good people of Minnesota elect Jesse Ventura as their governor? Did they temporarily lose their minds? I suggest that it is an image-saturated culture that has made Jesse Ventura possible — a person who is not an anomaly but a prototype of what is to come.

Traditionally, distinguished persons of achievement constituted the candidate pool for political office. Now we must add celebrities. In the twentieth century, the hero, who in centuries past distinguished himself with noteworthy achievements, was replaced by the celebrity, who is distinguished solely by his image.20 Something changed in American political life ever since the Nixon-Kennedy televised debates. Some say Nixon lost the presidential election to Kennedy because he was not telegenic enough. Quite possibly, that is why Abraham Lincoln could not be elected president were he running today.

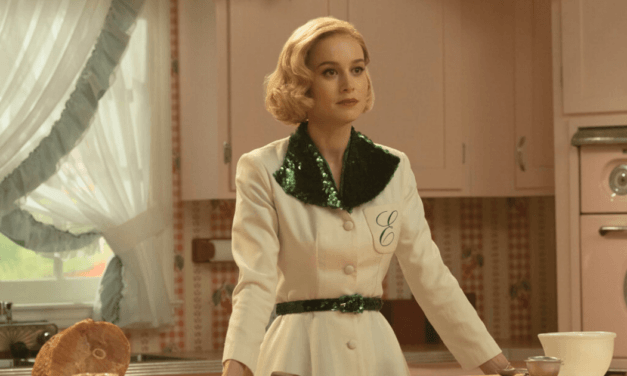

While our political landscape is in flux, Christianity seems to be experiencing a type of remodeling, especially within its own sanctuaries. Generation Xers are hungering for a new style of worship that bears a closer resemblance to MTV than to their parents’ old-time religion. In an opening address at the 1999 Southern Baptist Convention, Paige Patterson urged his audience to be careful of “twelve-minute sermonettes generated by the ‘felt needs’ of an assembled cast of postmodern listeners augmented by drama and multiple repetitions of touchy-touchy, feely-feely music.”21

The president of the nation’s largest Protestant denomination was alluding to a new style of worship, where congregants “come as they are,” whether it be in jeans, shorts, or T-shirts. (One church in Virginia Beach had to alter this policy when soon after a waterfront sign was erected, visitors started showing up in their bathing suits.) Drama, dance, video clips, rock ‘n’ roll, TV talk show formats, and eating in the services are just some of the elements of the growing “worship renewal movement” where people attend church much in the same manner as they watch Who Wants to Be a Millionaire? A critical examination would indicate that the movement is a by-product of a culture that has been weaned on television.

Culture critic Neil Postman begins his book, Amusing Ourselves to Death, by contrasting two fictional antiutopias — George Orwell’s 1984 and Aldous Huxley’s Brave New World. Orwell feared that we would be overcome by a tyranny that would take away our freedoms — Big Brother looking over our shoulder. Huxley feared that we would be ruined by what we came to love. In 1984 books are banned. In Brave New World no one wants to read a book. In one novel, Big Brother suffocates civilization with a forceful hand. In the other novel, civilization comes to adore its technologies so much that it loses the capacity to think, preferring rather to be entertained. Postman says that when we become “distracted by trivia, when cultural life is redefined as a perpetual round of entertainments, when serious public conversation becomes a form of babytalk, when, in short, a people become an audience and their public business a vaudeville act, then a nation finds itself at risk; culture-death is a clear possibility.”22

Here we are now, hitchhiking on the information highway. The computer has ushered us into the information age. In a decade or two the television and the computer will probably merge into one technology. It is highly likely that “text” will be deemphasized in whatever form electronic media take in the future. This is altogether frightening; for if it was a logos-centered culture that helped produce our Protestant heritage, as well as American democracy, what will be the birth child of the continuing devaluation of the written word? An image-soaked culture thwarts Jefferson’s notion of an informed populace.

There now seems to be enough consensus to acknowledge that the emergence of postmodernism is actually the by-product of two tandem occurrences: the rapid rise of the image and the obvious consequences of moral relativism. Postmodernism is a turning from rationality, and at the same time, an embracing of spectacle. In accordance with this thought, we are all in danger of becoming pagans. Not just pagans, but mindless and defenseless pagans who would prefer to have someone tell us how to think and act.

We should not forget that pagan leaders from Ramses II to Nebuchadnezzar to Antiochus IV to Augustus used the Image to bind their populaces. Pagan idolatry has always been a type of “fascism of the eye.”23 The dominance of the Image over the Word was a basic tenet of fascist theory in Hitler’s day.24 The possibility of tyranny exists for us because we have lost the rhetorical and mental defenses to arm ourselves against demagoguery. Our children are not being equipped to spot counterfeit leaders who would lead us astray with an overabundance of pathos.

Ignoring history’s warnings of technology’s tendency to change us, we have blindly boarded a glitzy train with a one-way ticket to digit city. Like Pinocchio, we are being hoodwinked into making a journey to Pleasure Island, and we will, in all likelihood, share the same fate as those laughing donkeys.

Arthur W. Hunt III holds a Ph.D. in Communication and is a Christian school educator. He is currently working on a book entitled, The Vanishing Word: The Triumph of Idolatry in Our Age.

1. Leonard Mosley, Disney’s World (Chelsea, MI: Scarborough House, 1990), 290.

2. Dennis McCafferty, “New Frontiers: Changing the Way We Play,” USA WEEKEND, 28-30 May 1999, 4.

3. Lewis Mumford, Technics and Civilization (New York: Harcourt, Brace and World, 1934), 85.

4. John Costello, The Pacific War (New York: Rawson, Wade, 1981), 581.

5. Ibid., 582.

6. Peter Wyden, Day One: Before Hiroshima and After (New York: Simon and Schuster, 1984), 135.

7. Roger Burlingame, Henry Ford: A Great Life in Brief (New York: Knopf, 1966), 3.

8. Robert S. Lynd and Helen Merrell Lynd, Middletown: A Study in American Culture (New York: Harcourt, Brace and World, 1929), 7.

9. James Lincoln Collier, The Rise of Selfishness in America (New York: Oxford University Press, 1991), 151.

10. Lynd and Lynd, 258.

11. David Gelernter, 1939: The Lost World of the Fair (New York: The Free Press, 1995), 36-37.

12. Ibid., 167-68.

13. Quoted in ibid., 167.

14. Quoted in ibid., 354.

15. Karl Zinsmeister, “Wasteland: How Today’s Trash Television Harms America,” The American Enterprise, March–April 1999, 30.

16. “Adult Literacy in America,” National Center for Education Statistics, U.S. Department of Education, 1993.

17. This is the definition for literacy used in ibid.

18. George Barna, Virtual America (Ventura, CA: Regal Books, 1994), 34.

19. Ibid., 34-35.

20. See Daniel Boorstin, “From Hero to Celebrity: The Human Pseudo-Event,” in The Image (New York: Harper and Row, Colophon Books, 1961), 45-76.

21. Julia Lieblich for the Associated Press, “Southern Baptists Urged to Avoid New Methods of Worship,” The Clarksville (Tennessee) Leaf-Chronicle, 16 June 1999, B3.

22. Neil Postman, Amusing Ourselves to Death: Public Discourse in the Age of Show Business (New York: Penguin, 1985), 155-56.

23. Camille Paglia, Sexual Personae: Art and Decadence from Nefertiti to Emily Dickinson (New Haven, CT: Yale University Press, 1990), 139.

24. Gene E. Veith, Jr., Modern Fascism: Liquidating the Judeo-Christian Worldview (St. Louis, MO: Concordia Publishing House, 1993), 146.